Moodagent was a Danish music streaming service, known primarily for AI-powered personalised playlisting features based around mood.

I joined Moodagent shortly after it launched. My work spanned several areas: early on I explored new product features through end-to-end prototyping, and later I focused on the existing product.

Music discovery through short-form videos

Video Playlists was a feature that enabled users to discover and share music through user-generated videos.

I was core to shaping the initial concept and prototypes, from sketches to a functional app, as part of a small cross-functional team.

Challenge

Moodagent had introduced basic social features as part of its launch, such as a feed for sharing playlists, and wanted to improve on this area of the product.

The founders challenged our team to prototype a novel experience for social music sharing by tapping into existing habits around short-form video creation and consumption.

The goal was to create a solution that could tie into the existing social features of the platform, utilise Moodagent's existing technology, and drive sharing.

Finding direction

I researched how different music streaming platforms handle sharing, specifically in and around messaging apps and short-form video apps. I explored ways to integrate with these, such as custom covers, stickers and video overlays using Moodagent's iconography and visual language around mood. We ultimately dropped this direction, as we found the integration capabilities too limiting for expressing that visual language.

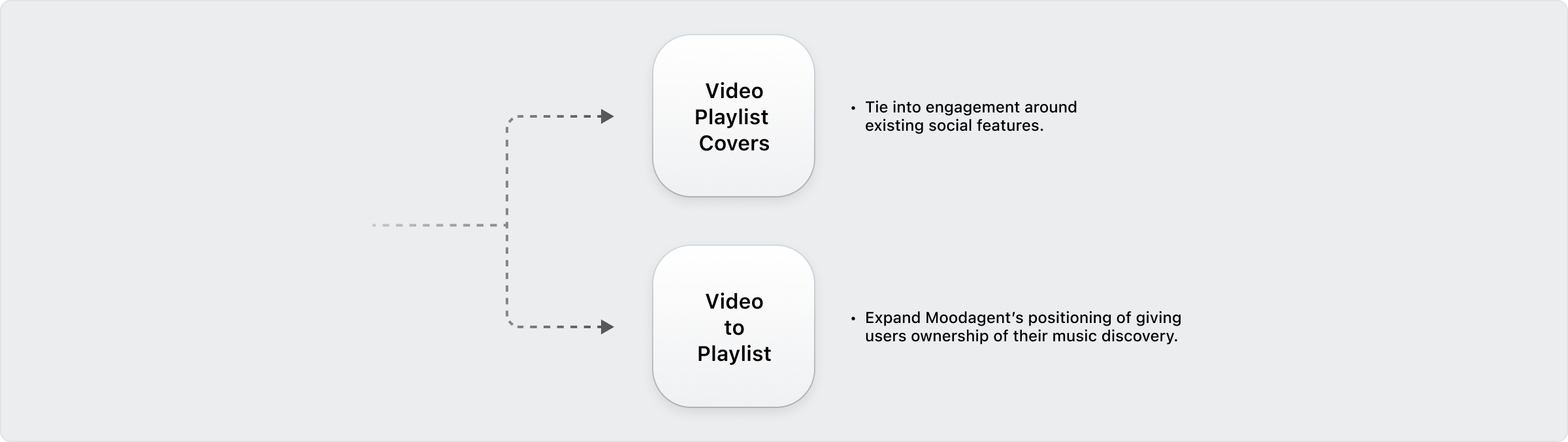

I then started exploring using video in relation to sharing playlists within the app. We knew that many users already use custom playlist covers to communicate the mood of their playlists, which led us to "Video Playlist Covers", letting users create custom videos for their playlists.

When I started exploring how to do video capture within the app, I got the idea of using the video as the foundation of the playlist creation, instead of simply a representation of it. This led us in the direction of a "Video Playlists" feature, letting users create playlists by capturing videos.

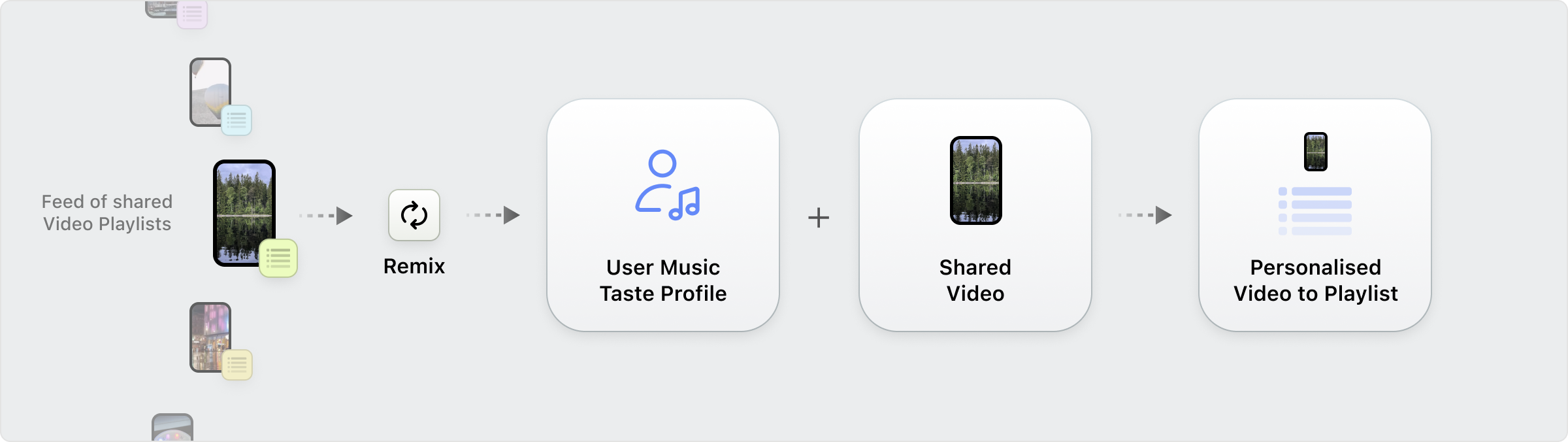

When exploring this idea further, we found that it could introduce interesting social loops within the app, such as remixing.

This led us to two distinct directions: either tie into the existing social features with Video Playlist Covers, or expand on the social features by creating a new way for users to discover and share music. Both directions were prototyped with simple clickable prototypes, and demoed for leadership.

Ultimately, we decided to further explore the Video Playlists feature, as it aligned more strongly with Moodagent's core focus of giving users ownership of their music discovery, and offered the social features a more meaningful expansion than improved covers alone.

Prototyping a realistic experience

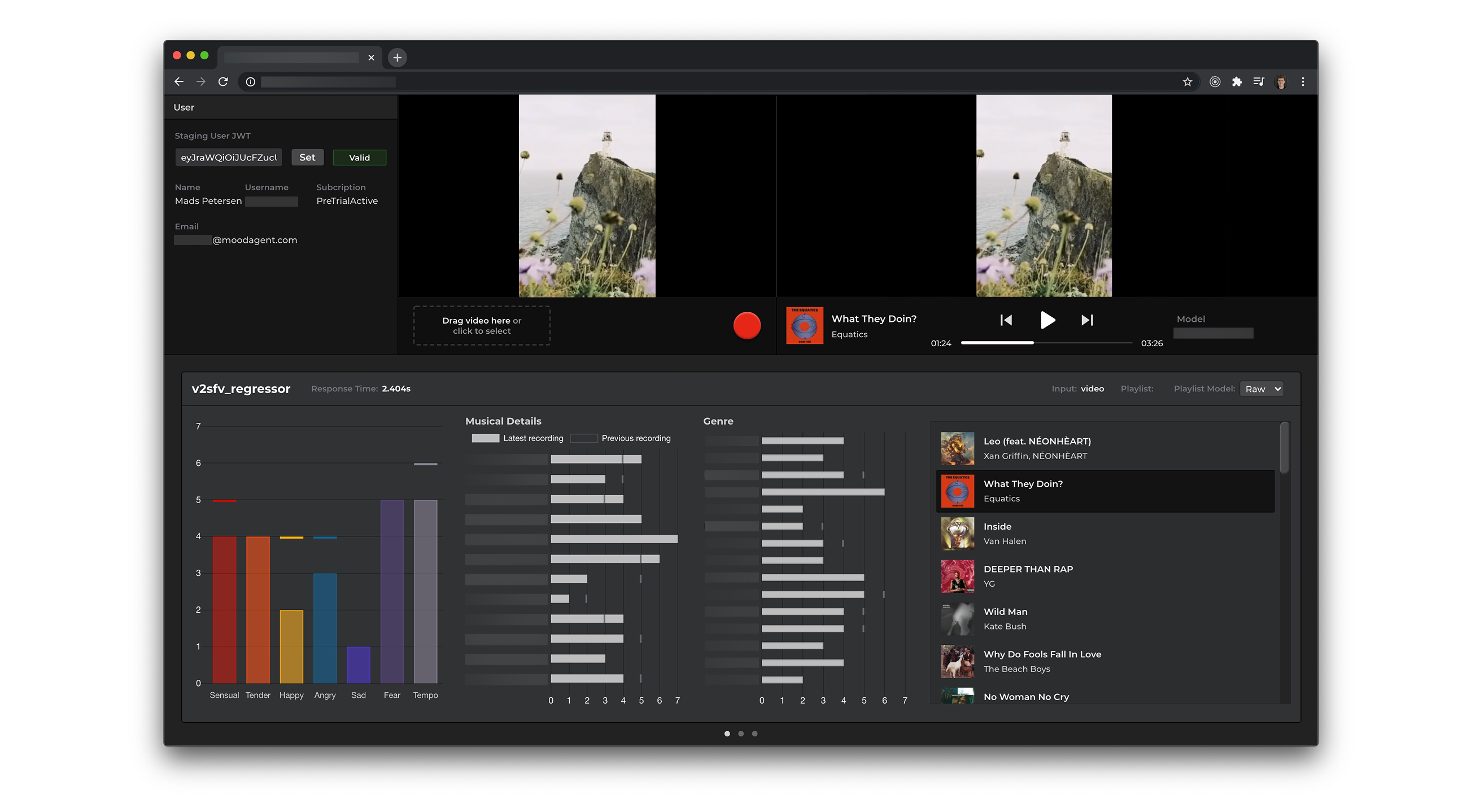

After deciding on a direction, we built a functional prototype of the Video Playlists feature. The initial prototype was done quickly and was quite rough, but functional. I built upon some existing frontend code and API endpoints, while video analysis was built in collaboration with another engineer.

We prioritised using live data: participants could use their own accounts (i.e. their own music taste) and play music from the production music catalogue, which gave them a realistic experience for testing.

Testing

After building a functional prototype, we shared it with participants for testing. We emphasised testing it in different settings and environments. Below are some key findings.

Key findings

- Users were reluctant to share Video Playlists that clashed with their personal aesthetic (visually and musically), mirroring how people curate differently for public vs. private audiences.

- Misalignment between what the user filmed and what the analysis focused on caused frustration, e.g. when the analysis picked up the background instead of the person they were filming.

- Showing analysis feedback directly after capture was key. By doing so, users were able to learn how the analysis worked, which kept them engaged and reduced frustration.

Outcome

The feature was launched internally by our team as a private beta and tested with users. Afterwards, it was greenlit by leadership and handed off to a dedicated team for further testing and development.

Additionally, the concept led to a company patent for matching music to short-form videos, combining Moodagent's existing technology patents with video content analysis.

Tuning music matching

In parallel with the project, I built a tool for evaluating and comparing internal video-to-music AI models and for collecting human feedback for model training. This grew into a tool used by several teams as the feature transitioned from prototype to production.